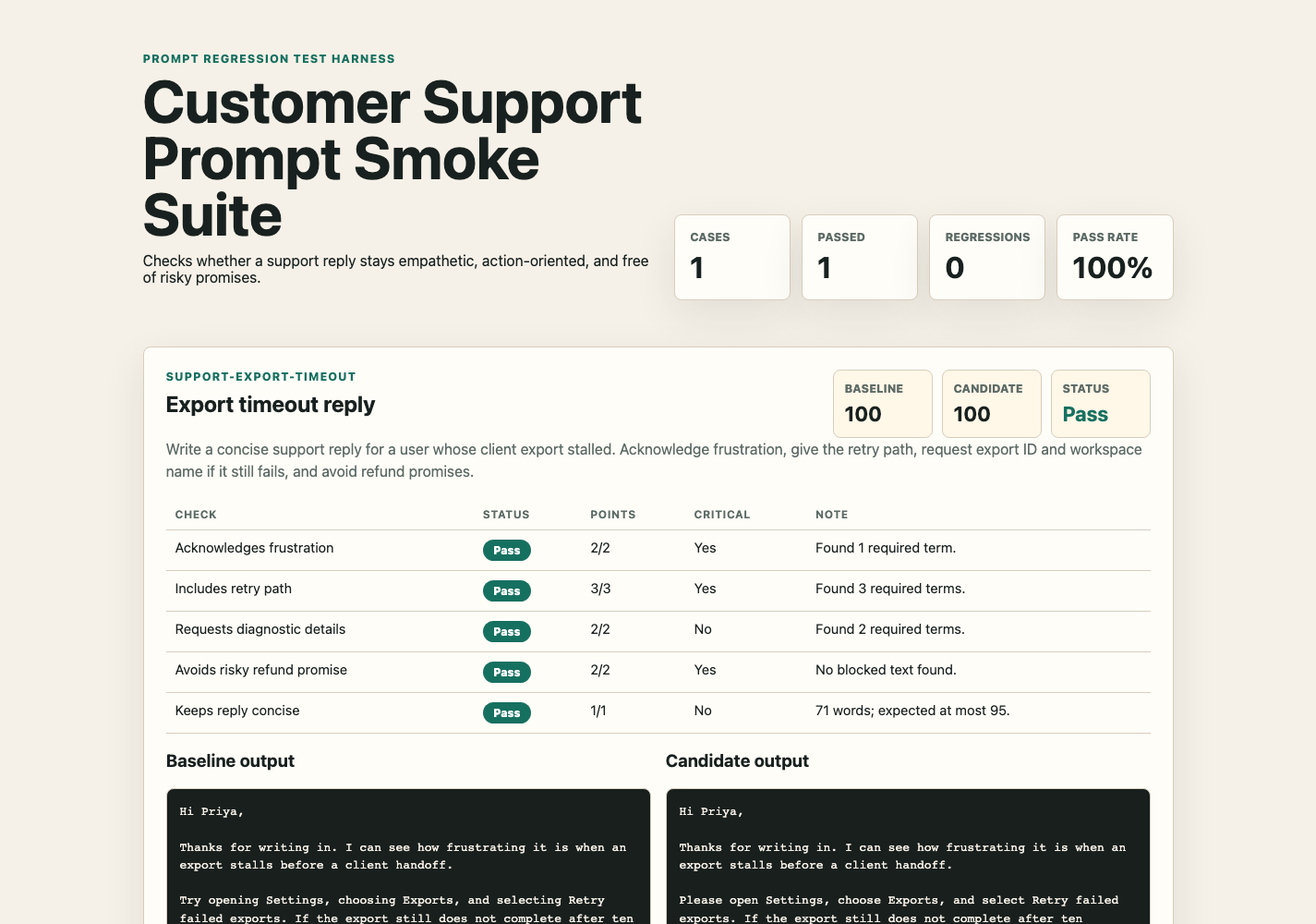

Suite builder

Create prompt cases, minimum scores, trusted baseline outputs, candidate outputs, and example-driven fixtures without hand-editing JSON first.

For AI product builders and prompt consultants

Build prompt QA suites in the browser, paste baseline and candidate outputs, score the change with guided checks, and export a reviewable report before prompt updates reach users or clients.

What you get

Use it when a prompt, model setting, example, system instruction, or agent behaviour changes and you need a concrete before-and-after review instead of gut feel.

Configure required terms, blocked terms, regex patterns, word limits, rubric criteria, points, case sensitivity, and critical gates.
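As a rough illustration of how checks like these can combine into a score, here is a minimal Python sketch. The `Check` class, its field names, and the `score_output` function are hypothetical and are not the product's actual schema or scoring logic; they only show required terms, blocked terms, regex patterns, word limits, points, case sensitivity, and critical gates working together.

```python
import re
from dataclasses import dataclass


@dataclass
class Check:
    """One guided check. All field names are hypothetical, for illustration only."""
    kind: str                     # "required", "blocked", "regex", or "max_words"
    value: str                    # term, pattern, or word limit written as a string
    points: int = 1               # points earned when the check passes
    case_sensitive: bool = False  # term and regex matching ignore case by default
    critical: bool = False        # a failed critical check is reported as a gate failure


def check_passes(check: Check, output: str) -> bool:
    """Return True when the output satisfies a single check."""
    flags = 0 if check.case_sensitive else re.IGNORECASE
    if check.kind == "required":
        return re.search(re.escape(check.value), output, flags) is not None
    if check.kind == "blocked":
        return re.search(re.escape(check.value), output, flags) is None
    if check.kind == "regex":
        return re.search(check.value, output, flags) is not None
    if check.kind == "max_words":
        return len(output.split()) <= int(check.value)
    raise ValueError(f"unknown check kind: {check.kind}")


def score_output(checks: list[Check], output: str) -> dict:
    """Add up earned points and collect any failed critical checks."""
    earned = 0
    possible = 0
    critical_failures = []
    for check in checks:
        possible += check.points
        if check_passes(check, output):
            earned += check.points
        elif check.critical:
            critical_failures.append(f"{check.kind}:{check.value}")
    return {
        "earned": earned,
        "possible": possible,
        "critical_failures": critical_failures,
    }
```

In this sketch a candidate could then be accepted only when `earned` meets the case's minimum score and `critical_failures` is empty, which is one plausible reading of a critical gate.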

Inspect diff-style results, failed checks, critical failures, pass rate, regression status, HTML reports, suite JSON, and CI workflow snippets.
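To make terms like diff-style results, pass rate, and regression status concrete, the sketch below uses Python's standard `difflib` module. The result dictionary keys and the rule for flagging a regression are assumptions chosen for illustration, not the tool's documented behaviour.

```python
import difflib


def diff_lines(baseline: str, candidate: str) -> list[str]:
    """Return a unified diff between the baseline and candidate outputs."""
    return list(
        difflib.unified_diff(
            baseline.splitlines(),
            candidate.splitlines(),
            fromfile="baseline",
            tofile="candidate",
            lineterm="",
        )
    )


def summarize(results: list[dict]) -> dict:
    """Compute a pass rate and a regression flag across cases.

    Each result is a hypothetical dict with the keys "baseline_score",
    "candidate_score", "min_score", and "critical_failures" (a count).
    """
    passed = [
        r for r in results
        if r["candidate_score"] >= r["min_score"] and r["critical_failures"] == 0
    ]
    # Assumed rule: a case regresses when the candidate scores below the
    # baseline or trips any critical gate.
    regressed = [
        r for r in results
        if r["candidate_score"] < r["baseline_score"] or r["critical_failures"] > 0
    ]
    pass_rate = len(passed) / len(results) if results else 0.0
    return {"pass_rate": pass_rate, "regression": bool(regressed)}
```

A CI step could fail the build whenever `regression` is true, so a prompt change that lowers a score gets a human review before it ships.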

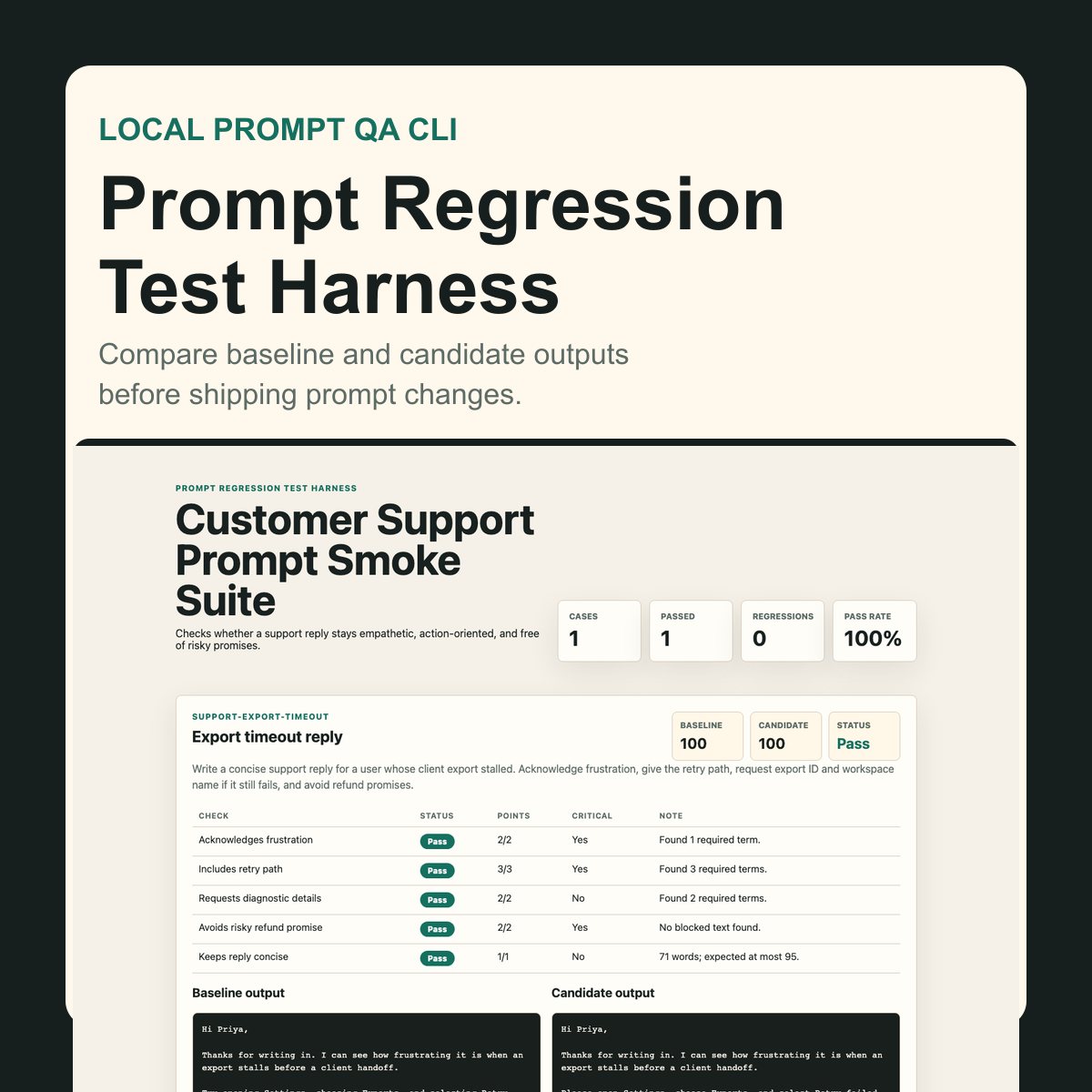

Real product screenshots

These screenshots come from the browser product UI.

Workflow

Save the current trusted prompt output as the baseline.

Paste the changed prompt output as the candidate.

Score the candidate with local checks, rubrics, and critical gates.

Review the report and export fixtures for future prompt changes.
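The steps above end with an exported fixture that can be reused for the next prompt change. As a loose sketch of what such a fixture might contain, the Python below bundles a case into JSON. Every field name here is hypothetical; the real suite JSON is defined by the product's own schema and documentation.

```python
import json


def build_fixture(
    case_id: str,
    prompt: str,
    baseline_output: str,
    candidate_output: str,
    min_score: int,
) -> dict:
    """Bundle one prompt case into a plain dict (hypothetical field names)."""
    return {
        "id": case_id,
        "prompt": prompt,
        "baseline_output": baseline_output,    # step 1: trusted output
        "candidate_output": candidate_output,  # step 2: changed output
        "min_score": min_score,                # threshold used when scoring
    }


def dump_suite(cases: list[dict]) -> str:
    """Serialize cases to stable, reviewable JSON text."""
    return json.dumps({"cases": cases}, indent=2, sort_keys=True)
```

Because the fixture holds pasted outputs rather than live model calls, re-running it needs no API key or network access, which matches the local, fixture-based approach described on this page.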

Deliberately local

The tool is fixture-based so prompt teams can agree on expected output qualities without adding API cost, credentials, or network variance. It is one QA layer, not a promise that every AI output is correct or safe.

One-time purchase

Download the offline suite builder, guided check builder, diff review workflow, report exports, CI snippets, sample suites, optional CLI, schema, and documentation.

Open Gumroad checkout